What to know

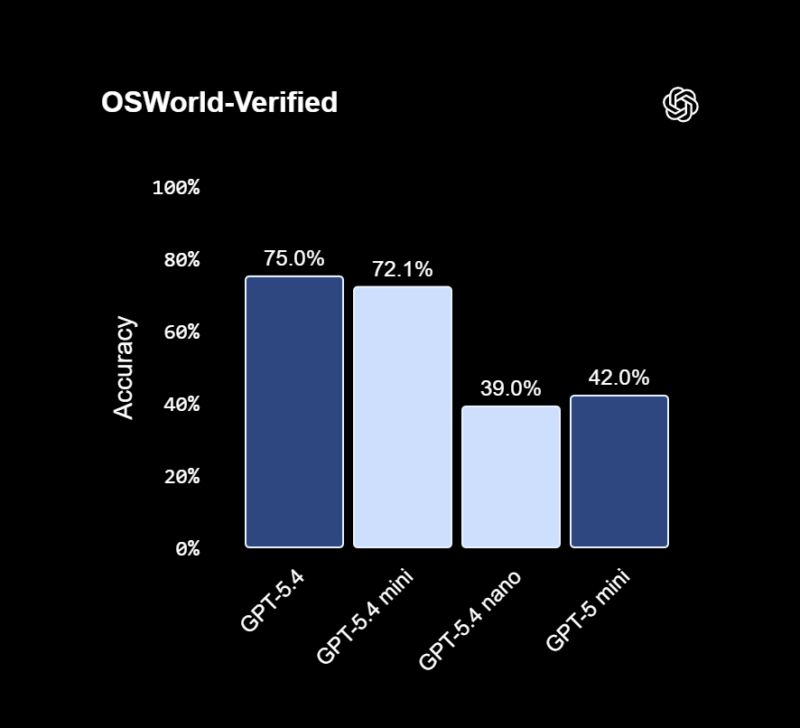

- OpenAI launched GPT-5.4 mini and nano on March 17, 2026

- Mini offers near-flagship performance at higher speed

- Nano is built for ultra-low cost, high-volume tasks

- Both models target developers, automation, and real-time AI use

OpenAI has expanded its latest AI lineup with GPT-5.4 mini and GPT-5.4 nano, two smaller, optimized versions of its flagship GPT-5.4 model. The release reflects a clear shift: powerful AI is no longer limited to heavy, expensive systems and is becoming faster, lighter, and more accessible.

The GPT-5.4 mini model is designed to give you performance close to the full GPT-5.4 model at significantly higher speed. In some cases, it can run more than twice as fast as earlier mini versions, while still handling complex reasoning, coding, and multimodal tasks such as image understanding.

You can already access GPT-5.4 mini inside ChatGPT, including on lower-tier plans, where it serves as both a primary model and a fallback when usage limits are reached.

At the same time, GPT-5.4 nano takes a different approach. It focuses on extreme efficiency and scale, making it suitable for tasks like classification, data extraction, and automated workflows. Unlike mini, nano is available mainly through the API, where developers can deploy it in high-volume systems at very low cost, starting at around $0.20 per million input tokens.
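To get a feel for what that price means in practice, here is a minimal back-of-the-envelope sketch. It uses only the roughly $0.20 per million input tokens figure quoted above; output-token pricing is not covered in this article, so it is left out. The function name and the sample workload are hypothetical and chosen for illustration only.

```python
# Assumption: nano input price of about $0.20 per million tokens, as quoted
# in the article. Actual pricing may differ; check the official price list.
NANO_INPUT_USD_PER_MILLION = 0.20


def estimate_input_cost(input_tokens: int,
                        usd_per_million: float = NANO_INPUT_USD_PER_MILLION) -> float:
    """Return the estimated input-token cost in US dollars."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    return input_tokens / 1_000_000 * usd_per_million


if __name__ == "__main__":
    # Hypothetical workload: 200,000 documents a day, about 500 input tokens each.
    daily_tokens = 200_000 * 500
    print(f"Estimated daily input cost: ${estimate_input_cost(daily_tokens):.2f}")
```

Because the estimate ignores output tokens, treat it as a lower bound rather than a full bill.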

Both models reflect a broader strategy: bringing advanced AI into real-time applications. You can expect better performance in coding assistants, faster automation pipelines, and smoother AI-driven tools that process text, images, and workflows with minimal delay.

What stands out is how OpenAI is positioning these models. Instead of replacing the flagship GPT-5.4, mini and nano are built to work alongside it. You use the full model for deep, complex tasks, while mini and nano handle speed-critical or repetitive workloads. This layered approach helps reduce cost while maintaining strong performance.
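The layered approach described above can be sketched as a simple routing rule. This is an illustrative assumption about how you might split work between tiers, not OpenAI's documented method. The `Task` type, the task kinds, and the tier labels are hypothetical, and mapping a tier label to an actual API model identifier is left to you.

```python
from dataclasses import dataclass

# Assumption: task kinds the article lists as a good fit for nano.
HIGH_VOLUME_KINDS = {"classification", "data_extraction", "automated_workflow"}


@dataclass(frozen=True)
class Task:
    kind: str                        # e.g. "classification", "coding", "analysis"
    latency_sensitive: bool = False  # True when a fast reply matters most


def choose_tier(task: Task) -> str:
    """Pick a model tier label: "nano", "mini", or "flagship"."""
    if task.kind in HIGH_VOLUME_KINDS:
        return "nano"      # repetitive, high-volume work at the lowest cost
    if task.latency_sensitive:
        return "mini"      # speed-critical work that still needs strong reasoning
    return "flagship"      # deep, complex tasks


if __name__ == "__main__":
    for task in (Task("classification"),
                 Task("coding", latency_sensitive=True),
                 Task("analysis")):
        print(task.kind, "->", choose_tier(task))
```

In a real system you would likely refine the rule with measured quality and cost per task type, but even a static rule like this keeps the flagship model reserved for the work that needs it.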

For developers and businesses, this means you can now design systems where AI is always-on and scalable, rather than limited by compute or budget constraints. For everyday users, it means faster responses and wider access to advanced features that were previously restricted.

In simple terms, OpenAI is making AI not just smarter but practical at scale.